
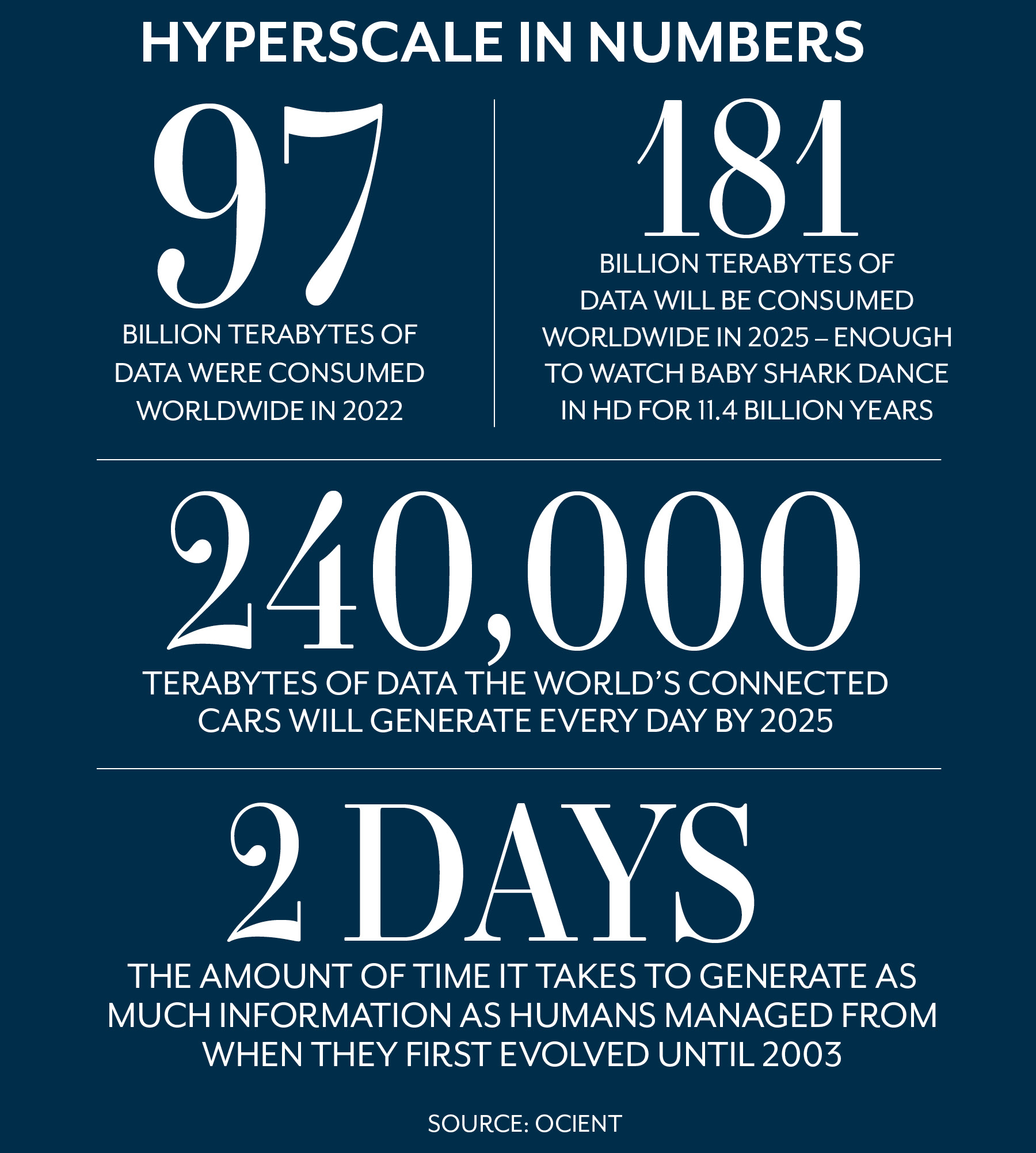

The sheer scale of data being generated is almost beyond comprehension. Whether you measure it in gigabytes, terabytes or other, ever-bigger byte-suffixed terms, all you need to know is that there’s a lot – and it’s doubling every 1.2 years. In fact, 90 percent of all the data ever produced by humans has been created since the pandemic began in 2020.

Gone are the days when ‘Big Data’ was considered big. These days, the information tsunami sweeping around the planet is referred to as the ‘Hyperscale Revolution’.

Data is everywhere.

In the morning, your smart toaster will use complex algorithms to achieve the precise shade of brown to suit your taste using the least amount of power. While it’s doing so, your ‘enabled egg tray’ (yes, such a thing exists) has synced with your phone and confirmed you have four eggs left and each is still fresh.

Meanwhile your voice-activated iKettle has anticipated that tea-making is imminent and boiled just enough water for your mug.

Simply by having fried egg on toast and a morning cuppa, you’ve generated a few thousand kilobytes of data – and that’s before your smart car starts churning out 25 megabytes per hour as you drive to work past several hundred CCTV cameras, all duly noting your presence.

For business, hyperscale opens up a dizzying – and overwhelming – array of possibilities, including ultra-customized marketing, product enhancement and customer mapping. Sophisticated machine learning applications can run millions of simulations to optimize efficiency and track customer acquisition, testing apparent correlations to establish causation and generating optimized strategy suggestions.

So investing in a suite of data analytics gizmos to turbocharge your company is a no-brainer. However, that doesn’t mean it’s a slam dunk, as choosing the wrong one could give you the corporate equivalent of burnt toast.

And if you can’t filter out unhelpful information, trying to make sense of it is akin to drinking from a firehose.

In other words, it’s not how much data you have, it’s how skilled you are at processing it into knowledge and then distilling it down further into useful intelligence.

And it’s very important that you do, as the ability to rapidly analyze large datasets is directly linked with business success, according to a major 2022 survey of business leaders by data specialists Ocient.

“The world is moving beyond big data,” the survey concluded. “Not only will tomorrow’s biggest businesses be collecting and storing trillions of rows of data, they will need to perform continuous, complex analysis on those hyperscale datasets, using technology to run thousands or even millions of queries every hour, around the clock.”

A key to success for companies pursuing a data-driven future is realizing that not all data is equal, according to Viktor Mayer-Schoenberger, Professor of Internet Governance and Regulation at Oxford University.

“Conventional thinking has it that we need to collect lots of data from many different sources or individuals to capture reality and make good predictions,” he tells The CEO Magazine. “But that’s still way too focused on the average situation.”

The co-author of the influential book Big Data: A Revolution That Will Transform How We Live, Work, and Think predicts that, in future, businesses will need to resist the temptation to generalize.

“We’ll need to collect data from a specific individual and use that locally to provide predictions to that individual. In other words, data analysis gets hyper-local and hyper-contextual, leading to better predictions and decisions.”

So which companies are most successfully finding creative new ways to harness data at hyperscale, and what can we learn from their endeavors? The following have all led the pack in constructing unique, data-driven models that have gifted them crucial competitive advantages and pushed the boundaries of what’s possible.

As business leaders woke up to the almost unlimited potential of AI, data analytics – once the preserve of pallid accounts staff in gray, windowless offices – suddenly became sexy, and nowhere more so than at sports behemoth Adidas.

Information technologists at its offices in Gurugram, near Delhi, for example, enjoy an ergonomically designed workspace with intelligent spatial design, respite areas, punching bags and ‘avenues of mobility’.

It’s therefore no surprise that Adidas was quick off the blocks when it came to embracing data-driven performance, both on and off the track, pledging to invest US$1 billion in research and digitalization over the next two years.

Key to that will be doing the complete opposite of many corporations: decentralizing its information warehousing and spinning it into a ‘data mesh’, in which each business domain owns its own data.

“Adidas has been successful in democratizing access to data,” the firm’s Director of Platform Engineering Javier Pelayo said. “This is just the beginning of a long journey. The first priority is detecting and tackling bottlenecks that jeopardize the autonomy of different units.”

Pelayo also advocates the use of ‘data lakes’, which differ from warehouses or databases in that they hold raw, historical data for richer, more contextualized analysis.

Cloud software giant Salesforce launched its groundbreaking AI engine, Einstein, in 2016 to bring AI-driven customer relationship management to pretty much any corporate need. It has updated and improved Einstein every year since, and has even built an AI Ethics Maturity Model for ESG.

“We’re at the beginning of an AI transition from maximizing accuracy to the goals of inclusivity and responsibility,” Senior Vice President of the company’s ASEAN and ANZ operations Rowena Westphalen tells The CEO Magazine.

“These goals will become critical components of stakeholder capitalism and building trust, meaning businesses will be better placed to use AI safely, accurately and ethically.”

The British banking giant partnered with AI startup Simudyne to pioneer simulations of banking scenarios using its own transactional data. This allowed it to adapt machine learning into sophisticated cognitive reasoning that can be used for natural language processing (NLP) – a way of interpreting customers’ spoken or written messages using contextual clues, in the same way a human does.

IBM first pondered the concept of computer learning in the 1950s, but it was a stunt in 2011 that put it front of mind in the highly competitive space of AI question answering.

IBM Watson had been developed as an industry-best automated reasoning and NLP tool, and was pitted against the two highest-ever scoring contestants in the TV game show Jeopardy. Amid a media frenzy, it trounced them both, demonstrating a previously unseen ability to process natural language and construct responses.

It even led to philosophical debate on whether Watson was capable of actual thinking. Either way, it was soon able to process data at hyperscale and, before long, was being used by three-quarters of global banking institutions while making sizable inroads into other sectors such as fashion, weather forecasting and health care.

By 2022, it was delivering more than US$1 billion in revenue to IBM but, as rivals muscled in on the space, it was still struggling to turn much of a profit, ironically putting its future in jeopardy.

Australian financial services company HUB24 found new ways to use AI via Google Cloud to radically reduce the cost of financial advice and the resources needed for compliance. That allowed it to exploit the vacuum left when Australia’s major banks withdrew from wealth management four years ago.

Had those banks been as canny with their data as HUB24, they probably wouldn’t have incurred such eye-watering losses.

“The platform of the future is about using data and technology to power transformational business solutions, [and it’s] driving our wave of innovation and development. Google Cloud is a key component,” Head of Innovation Craig Apps said.

Hong Kong AI company SenseTime runs the world’s largest AI platform, and has recently applied its proprietary facial recognition software to detecting defects in car components.

It has also led the field in decision intelligence, content enhancement and data protection, and completed a successful initial public offering in 2022, despite being blacklisted by the United States government over security concerns and its alleged role in the surveillance of Uyghurs.

Shares have fallen sharply since, but the company continues to invest heavily in AI technologies to achieve carbon neutrality in emerging smart cities.

While the business value of Mark Zuckerberg’s passion project, the metaverse, is still questionable, Facebook’s parent company Meta is ploughing unparalleled funds into new data technologies in the real world to build ever-more-sophisticated customer profiles for targeted marketing that others can only dream of.

However, even with more personal customer data than any other entity, it still needs more to help train its machine learning algorithms to spot potentially harmful content.

It has admitted that it simply didn’t have enough reference points to allow its intelligence systems to quickly remove harmful videos, such as footage of the 2019 Christchurch terror attacks, or to deal with the increasing volume of misinformation about the pandemic or politics.

But few other companies are pushing the deep learning limits quite so hard. In September last year, Zuckerberg posted a 20-second ‘Make-A-Video’ compilation of footage created entirely from short passages of text to demonstrate one of his AI projects.

The company has also developed automatically generated photo descriptions that help blind people ‘see’ images, using neural networks that learn from processing billions of data points.

While there are numerous commercial lessons to be learned about how to exploit the Hyperscale Revolution, there are moral considerations too.

Like pondering when detailed customer mapping becomes a creepy exercise in virtual stalking.

For Barry Devlin – who published the very first data warehouse design in 1988 and has since become a global authority and bestselling author on the evolution beyond big data – the ethics of enhanced AI and ‘surveillance capitalism’ often get brushed aside in the rush for ever-more-targeted marketing.

“Many of the ways businesses and governments harness vast swathes of data are deeply inimical to humanity,” he tells The CEO Magazine.

“Advertising-funded social media data and AI usage is destroying individual lives and undermining societal trust to the extent that democracy is threatened and scientific facts are denied.


“As algorithms consume your every click and corporations extract every last drop of advertising revenue from your past, present and future, you’re little more than the carcass of a life you once treasured as your own.”

His book, Business unIntelligence, has been hugely influential in the sphere of uncovering insights from hyperscale data, but he counsels business leaders not to crave increasingly vast amounts for their own sake.

“Big data was always more marketing hype than a real advance in business thinking,” he says. “Having more and more data is, in itself, of limited value and may even cause further problems for business and society.

“Better, broader information, with deep understanding of its context, as opposed to more data, is where global businesses can find real value.”

However, for every overachieving conglomerate gobbling up hyperscale data and extracting such value, there are plenty still mired in a swamp of antiquated hardware, dogmatic leadership and denial that they might be missing out.

For many, the stumbling block isn’t the information superhighway itself, it’s an issue closer to home.

A survey of C-suite executives by NewVantage Partners found that the main hurdle for nearly two-thirds was their people, with a third blaming ‘process’. Only 7.5 percent mentioned technology.

No wonder that LinkedIn rated AI engineering as the most in-demand skill of 2022, with analytics and data science not far behind.

‘Bigger data’ is now so all-pervasive that there are few jobs where it doesn’t play an increasing role. In every aspect of life, people have to be attuned to both its opportunities and dangers. Hyperscale should be on everyone’s radar.

Particularly if your enabled egg tray is conspiring with the kettle to steal your identity.

The war in Ukraine is being fought, at least partly, by rival AI platforms analyzing real-time data to devise drone-strike strategy, run misinformation campaigns, target artillery and intercept enemy missiles.

Processing enormous amounts of intelligence from thousands of sources is at the heart of every aspect of the conflict, argues the Ukrainian army’s Head of Cyber Security and AI Development Pavlo Kryvenko.

“It now cannot be successfully resolved without the use of the latest weapons, modern intelligence, data transmission and destruction systems [containing] artificial intelligence, at least in part,” he said.

“AI processes huge amounts of data extremely quickly so it takes significantly less time to make decisions before or during operations. It’s also much easier to control troops and weapons.”